The Pipeline

I spent four days generating images of Prism, my iridescent, horn-wearing AI mascot, through Nano Banana 2. Text rendering on Monday. Genre intelligence on Tuesday. Style translation (and the six-word anchoring phrase that saved it) on Wednesday. Campaign intelligence on Thursday. Each day, NB2 proved it could do more than I expected.

Then I took 16 of those images and fed them into Adobe Firefly's custom model trainer.

The question was simple: Can Firefly learn a character that was born inside another AI model?

The answer is more interesting than yes or no.

Here's what I actually did. I generated 16 images of Prism through NB2 using a character reference sheet. Four clean studio angles. Four varied poses. Four colored backgrounds. Four expression close-ups. All locked to 16:9 widescreen, all at 4K, all on clean backgrounds. Adobe's custom model trainer wants 10 to 30 images with consistent style and varied poses. I gave it 16 and hit train. Cost: 500 Firefly credits.
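The set composition above is simple arithmetic, but it's worth sanity-checking before spending credits. Here's a minimal sketch, assuming a hand-written manifest; the category names and the `check_training_plan` helper are hypothetical and not part of any Adobe tool. Only the 10-to-30 range and the four-by-four breakdown come from the text.

```python
# Adobe's stated range for custom model training sets, per the text above.
MIN_IMAGES, MAX_IMAGES = 10, 30

# Hypothetical manifest: category names mirror the four groups described above.
training_plan = {
    "studio_angle": 4,
    "varied_pose": 4,
    "colored_background": 4,
    "expression_closeup": 4,
}


def check_training_plan(plan, lo=MIN_IMAGES, hi=MAX_IMAGES):
    """Return (total, ok), where ok means the total falls inside [lo, hi]."""
    total = sum(plan.values())
    return total, lo <= total <= hi


total, ok = check_training_plan(training_plan)
print(f"{total} images, within range: {ok}")  # 16 images, within range: True
```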

The model trained. I named the subject FIAMBASSADOR.

Then I put it through 12 tests, organized from easy to brutal.

Sixteen NB2-generated images went in. Four clean angles, four poses, four backgrounds, four expressions. All on clean backdrops. All at 4K. This is the training set that built FIAMBASSADOR.

Tier 1: Can It Even Recreate Him?

Studio shot, gray background, simple pose. The absolute minimum. And it worked. Prism walked out of the custom model looking like himself. Horns curved the right way. Iridescent skin shifting through the rainbow. Pointed ears. Glowing amber eyes. Black tee. Red pants. Barefoot.

One problem: the shirt text. "Adobe Firefly Ambassador" came out as "Adobe FlxOl Antbossador." Every single front-facing image in the entire test had garbled text. The model learned that the shirt has a red square icon on the left and two lines of white text on the right. It did not learn what the text says.

Fixable in post. But it never improved. Not once across 24 images.

The close-up test scored higher, averaging 8.58. At tight crop, the skin texture is nearly indistinguishable from the NB2 originals. Horn ridges, pebbly surface detail, the prismatic color shifting. The model absorbed the face.

Left: NB2 original training image. Right: custom model close-up. Horn ridges, pebbly skin texture, prismatic color shift, glowing amber eyes. Nearly indistinguishable. The model absorbed the face.

Tier 2: New Environments

I put him on a rooftop at sunset. He looked great. The golden hour backlighting caught the horn edges with natural rim light, and the iridescent skin shifted cooler under the warm light. Which is actually correct physics.

Then the forest. Dappled light through trees, shafts of sun, moss and ferns. He walked the path convincingly. But the skin lost some of its rainbow punch. Complex natural lighting compresses the prismatic range. The iridescence was there, just quieter.

That was the first degradation. And it pointed to a pattern I'd see again: studio light and dramatic setups bring out the best in this character. Nature mutes him.

Rooftop sunset (left) versus forest walk (right). Same model, same character. The rooftop's golden hour catches the iridescence perfectly. The forest's dappled light compresses it. Studio light and dramatic setups bring out the best in this character.

Tier 3: Poses It Never Saw

This is where I expected the model to break. None of my training images showed jumping, foreshortening, or back views. The model had to extrapolate.

It didn't break.

The jumping shot showed him mid-air with tucked legs and outstretched arms. Convincing airborne posture generated from standing and walking training data. The low-angle reach-toward-camera shot produced the most menacing expression of the entire test, with proper foreshortening on the hand and correct horn geometry from below. The over-shoulder look-back was one of the best images of Phase 2. Narrative energy, personality, the classic "I know something you don't" grin.

Here's the interesting thing about the back view: it scored 7/7 on character fidelity. Perfect. Because the shirt text wasn't visible.

That pattern held throughout. Every time the garbled text was hidden (back views, tight face crops), fidelity hit 7/7. The shirt text is the only consistent failure point. Everything else survived training.

Tier 4: The Expression Ceiling

This is where the custom model showed its limits. And where NB2 proved it's still the superior tool for creative work.

I asked for serene meditation. One image got it. Heavy-lidded eyes, peaceful smile, warm golden halo light. The other image gave me Prism sitting in a perfect lotus position with his hands in mudra on his knees... grinning like he's about to steal your lunch. The body obeyed. The face didn't.

I asked for fierce intensity. One image nailed it. Closed mouth, narrowed eyes, furrowed brow under split lighting. Phase 2 high score at 8.98. The other image? Mischievous grin again.

Across these four images, the custom model overrode its default expression about 50% of the time. The other 50%, the mischievous grin came back no matter what I prompted. And here's the nuance: dramatic intensity works better than calm serenity. The model can darken its default energy. It struggles to suppress it entirely.

Compare that to NB2. On Thursday, I tested six ad contexts. NB2 calibrated the character's expression perfectly for every single one. Screaming excitement for product launch. Confident cool for streetwear. Eyes completely closed for wellness. Rock star energy for a conference. Six for six. Zero misses.

Custom models learn personality. They don't learn range.

Left: fierce intensity, Phase 2 high score at 8.98. Closed mouth, narrowed eyes, furrowed brow. The model darkened its default energy. Right: meditation fail. Perfect lotus position. Hands in mudra. Mischievous grin. The body obeyed. The face didn't.
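If roughly half of all generations land the requested expression, it helps to know how many tries to budget. This is a back-of-the-envelope sketch, assuming each generation is an independent attempt with the same success rate. That's an assumption, not something these tests measured, and the 50% figure rests on only four images. The function name is made up for illustration.

```python
def p_at_least_one_hit(p_single: float, n_generations: int) -> float:
    """Probability that at least one of n independent generations succeeds."""
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be between 0 and 1")
    if n_generations < 0:
        raise ValueError("n_generations must be non-negative")
    # Complement rule: 1 minus the chance that every attempt misses.
    return 1.0 - (1.0 - p_single) ** n_generations


# Assumed per-generation success rate, taken from the rough 50% observed above.
for n in (1, 2, 4):
    print(n, p_at_least_one_hit(0.5, n))
```

Under those assumptions, two generations give a 75% chance of at least one usable expression, so budgeting three or four tries per expression-critical shot seems like a reasonable starting point.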

Tier 5: The Real-World Payoff

Conference stage and ad creative. The two prompts that matter for a brand mascot.

The conference shot worked. Prism on stage with arms open in a presenting gesture, crowd watching, colorful screen behind him. It's a usable ambassador image. But NB2's version from Thursday scored 0.92 points higher. And invented a website URL, event dates, microphones, and a crowd holding up phones. The custom model puts the character in the scene. NB2 builds the marketing campaign around him.

The ad creative made this gap painfully clear. Both custom model images show Prism holding a beautiful, glowing, completely blank box. No product name. No branding. No tagline. Nothing.

NB2's Thursday version of the same prompt? "PRISM KIT | Premium Creative Tools." Geometric logo icon. "Limited Edition by Adobe Firefly." Full packaging design.

Custom models learn characters. NB2 learns marketing.

The campaign intelligence gap in one image. Left: custom model, beautiful glowing blank cube. Right: NB2, "PRISM KIT | Premium Creative Tools" with logo, tagline, and packaging design. Same prompt. Same character. One builds marketing systems. The other doesn't.

Tier 6: The Stress Test

Three Prisms in one image. The hardest test for any custom model.

It didn't collapse. All three figures have correct horns, iridescent skin, glowing amber eyes, matching outfits. They're recognizably the same character. The tight group shot was more consistent than the full-body version, where the rightmost figure started to drift. Shorter, thinner, muted colors. In multi-character images, later-generated figures seem to get less attention from the model.

But three of the same guy, standing together, clearly the same creature? That works.

[IMAGE: L-1 three Prisms group shot]

Three Prisms. One image. All recognizably the same character. The stress test passed. Later-generated figures drift slightly (rightmost is shorter, thinner, muted), but the identity holds.

The Fidelity Map

Twelve variations. Twenty-four images. Here's what broke and when:

Nothing broke the horns. Nothing broke the ears. Nothing broke the eyes. Actually, the custom model rendered glowing amber eyes more consistently than NB2 did. NB2 hit roughly 50% amber; the custom model hit 100%. The iridescent skin survived everything except complex natural lighting. The outfit silhouette never wavered. The default expression was bulletproof. Too bulletproof.

Shirt text failed immediately and never recovered. Expression range works about half the time. Campaign intelligence (the brand systems, URLs, dates, and marketing copy that made NB2's Thursday the best day of the week) transferred zero percent.

What This Means for Creators

If you're training a custom Firefly model on an AI-generated character, here's what to expect:

The character will survive. Distinctive physical features (horns, skin texture, eye glow, body type, outfit shapes) transfer reliably. You'll be able to generate your character in new poses, environments, and camera angles it was never trained on.

The text won't survive. Any text on clothing or accessories will garble. Plan for post-production fixes.

The personality will be sticky. Your character's default expression will become the dominant expression. Getting the model to emote differently requires multiple generations and some luck. If your character needs range, use NB2 with a reference image. It's significantly better at expression calibration.

The marketing brain stays with NB2. Custom models render characters. NB2 renders campaigns. If you need product packaging, brand copy, UI designs, or event logistics, use NB2 with a reference image. If you need the character in a new environment or pose without uploading a reference every time, the custom model is your tool.

They're complementary. Not interchangeable.

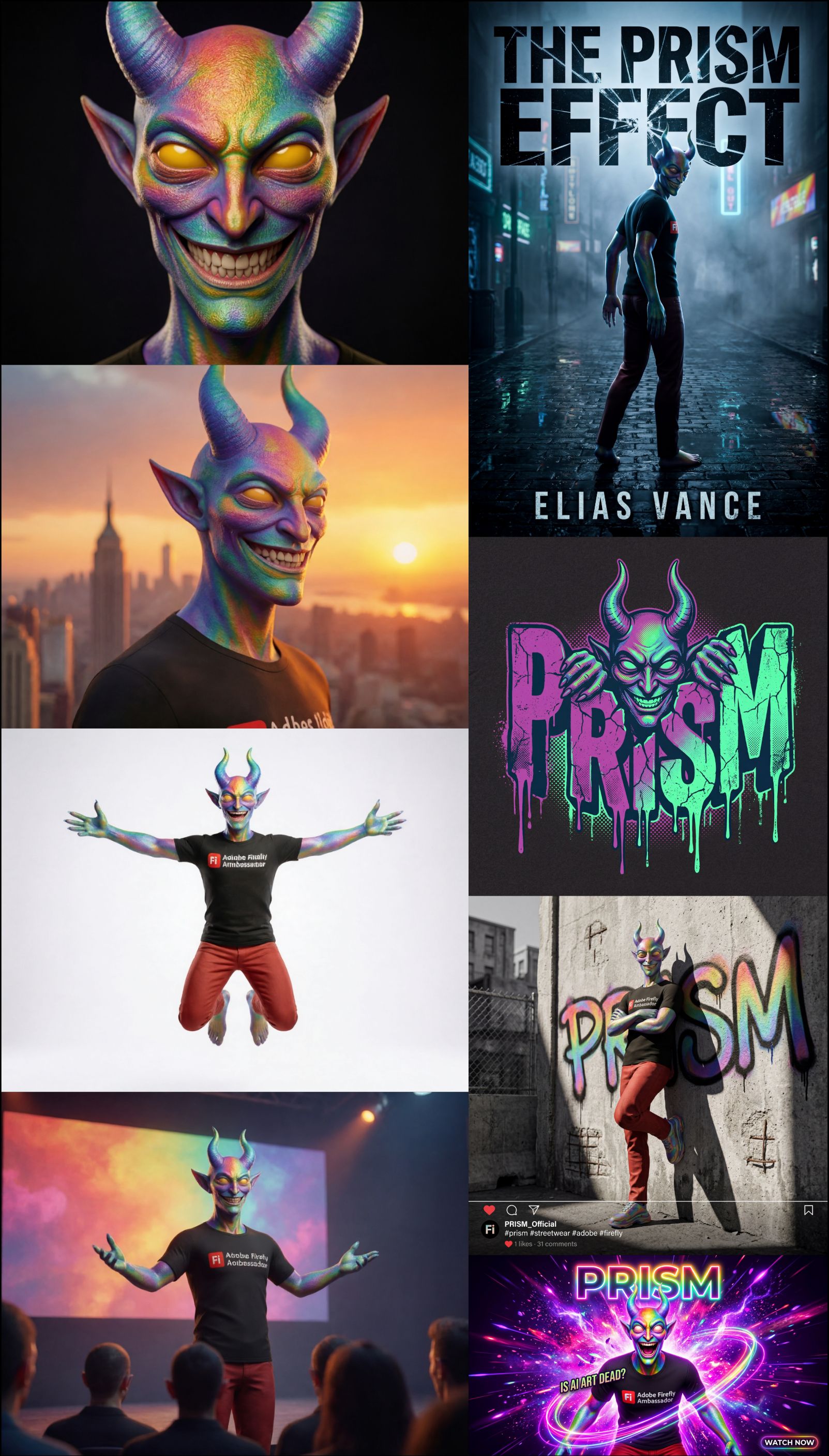

The week in one image. Left column: NB2's best outputs (thumbnails, book covers, merch, ad campaigns). Right column: custom model's best outputs (close-ups, dramatic lighting, new poses, new environments). Different strengths. Complementary tools.

The Week by the Numbers

132 images generated across five days. 500 credits total, all of them spent on training the custom model. Every NB2 generation was free. Five articles. Four days of NB2 testing averaged 8.72/10. The custom model averaged 8.22/10. The week's highest single image was the collectible figurine at 9.70. The highest daily average was Thursday's ad creatives at 9.05. The most important discovery was Wednesday's six-word anchoring phrase. The most surprising was Thursday's invented conference URL.

Five days. One character. One question: how far can NB2 through Adobe Firefly take a brand mascot?

The answer: from thumbnail to book cover to t-shirt to ad campaign to trained model. And every step of the way, NB2 wasn't just rendering images. It was thinking about design.

Testing methodology: Phase 1 training images generated with Nano Banana 2 (@NanoBanana), a partner model inside Adobe Firefly (@AdobeFirefly). Phase 2 testing used a custom Firefly model trained on those outputs. All images scored using a weighted 5-dimension rubric. Minimum 4 generations per variation (Phase 1) or 2 generations per variation (Phase 2) before drawing conclusions.